The motivation for our work is that rehearsal is a critical component for class-incremental continual learning yet requires a substantial memory budget. W investigate whether we can significantly reduce this memory budget by leveraging unlabeled data from an agent’s environment in a realistic and challenging continual learning paradigm. Specifically, we explore and formalize a novel semi-supervised continual learning (SSCL) setting, where labeled data is scarce yet non-i.i.d. unlabeled data from the agent’s environment is plentiful (visualized below).

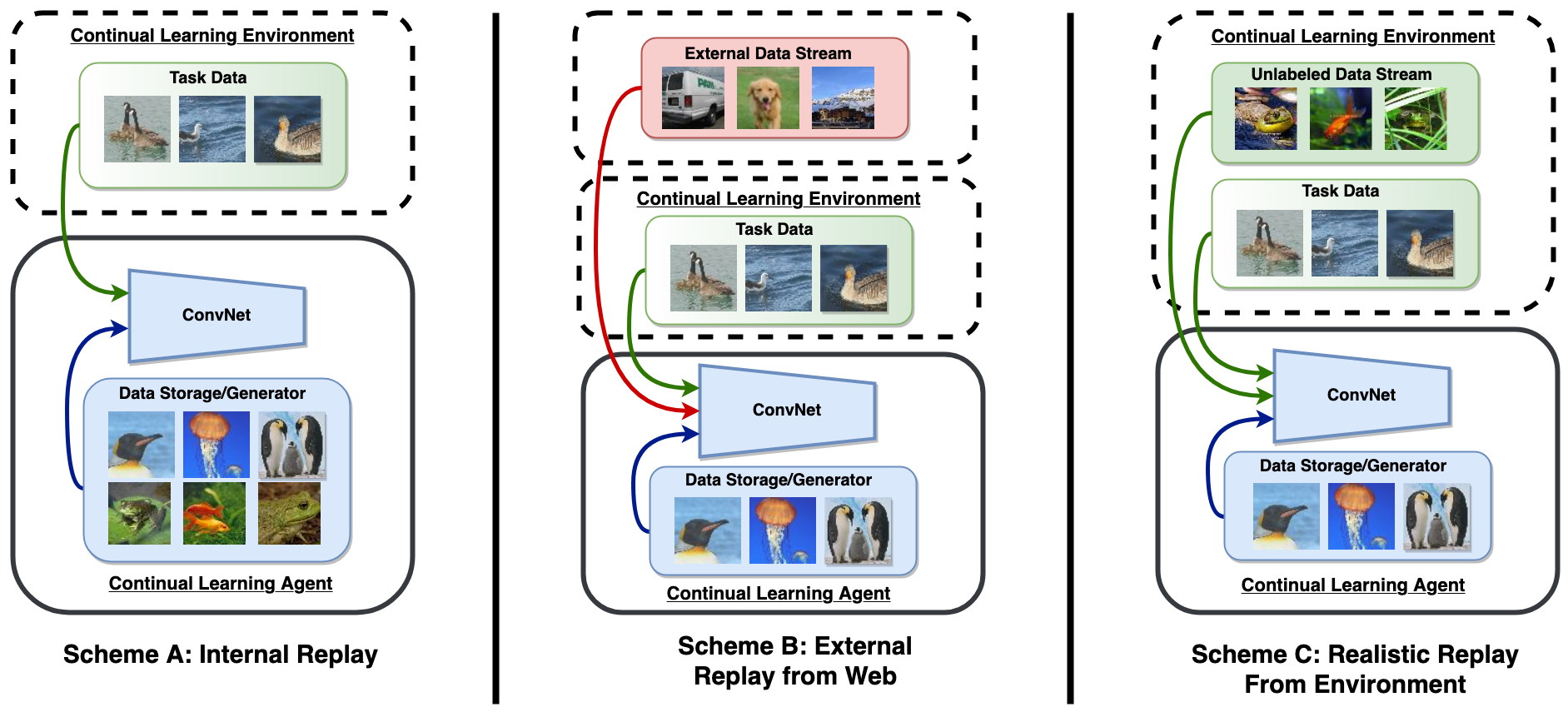

Unlike standard replay (scheme A) which requires a substantial memory budget, we explore the potential of an unlabeled datastream to serve as replay and significantly reduce the required memory budget. Unlike previous work which requires access to an external datastream uncorrelated to the environment (scheme B), we consider the datastream to be a product of the continual learning agent’s environment (scheme C).

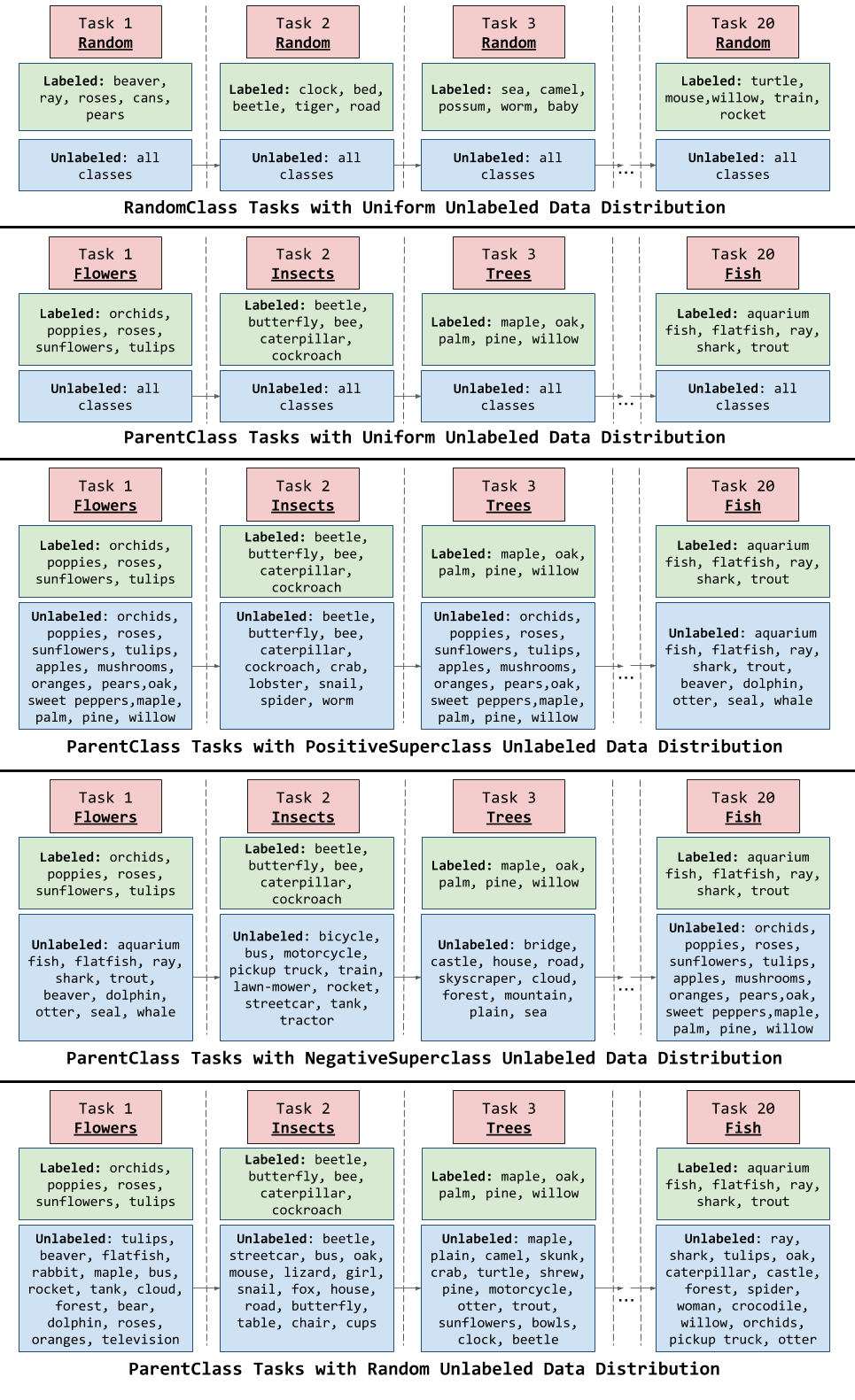

Importantly, data distributions in our SSCL setting are realistic and therefore reflect object class correlations between, and among, the labeled and unlabeled data distributions. The below figure visualizes the types of task data correlations that we investigate in our work, with more details available in the paper.

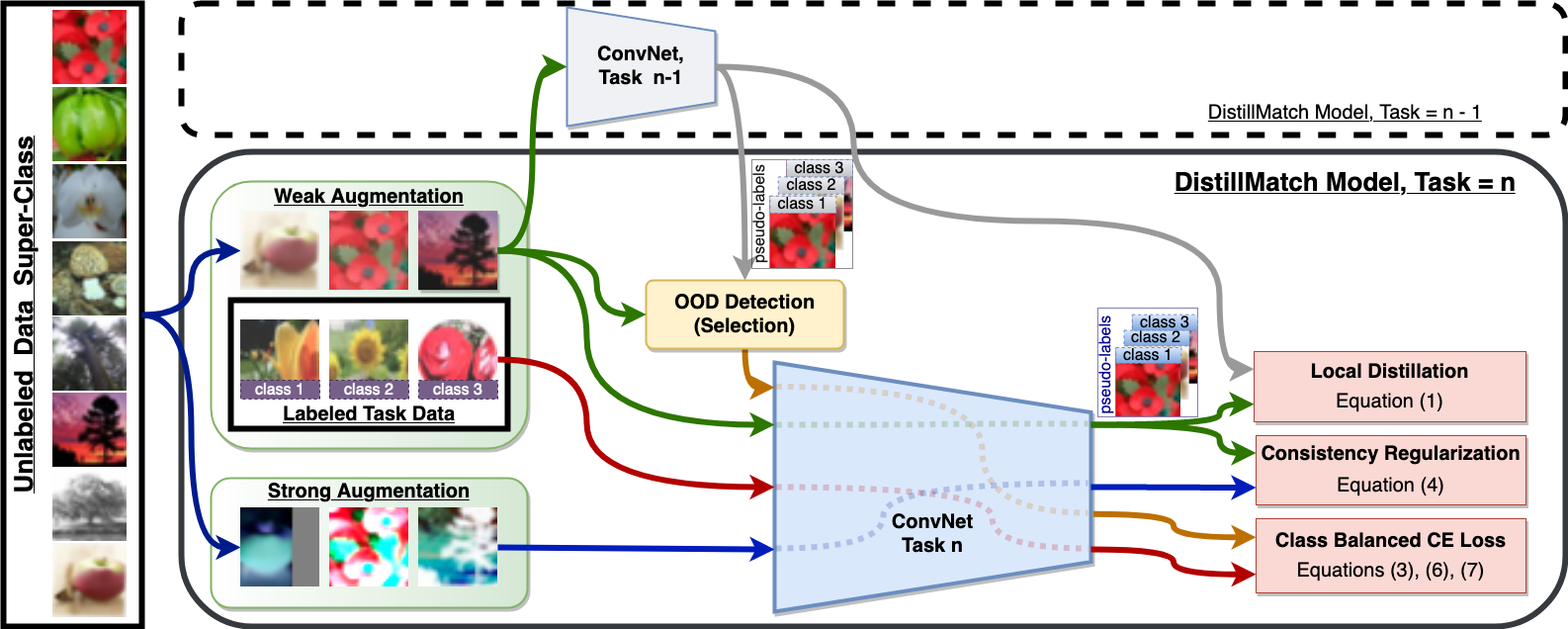

We show that a strategy built on pseudo-labeling, consistency regularization, Out-of-Distribution (OoD) detection, and knowledge distillation reduces forgetting in our setting. Our approach, DistillMatch (visualized below), increases performance over the state-of-the-art by no less than 8.7% average task accuracy and up to a 54.5% increase in average task accuracy in SSCL CIFAR-100 experiments.

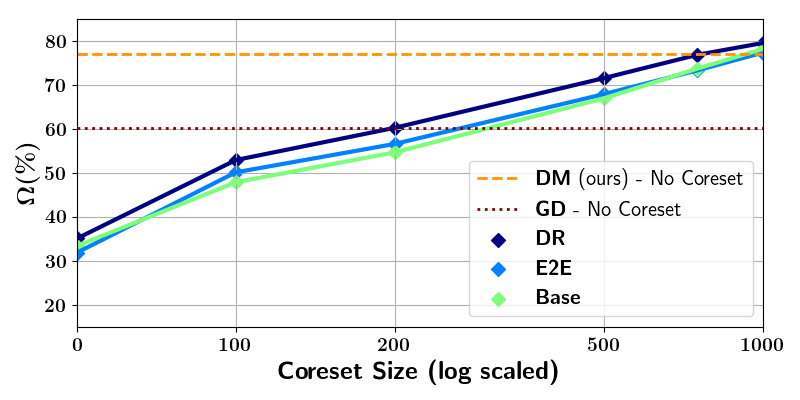

Moreover, we demonstrate that DistillMatch can saveup to 0.23 stored images per processed unlabeled image compared to the next best method which only saves 0.08.

Our results suggest that focusing on realistic correlated distributions is a significantly new perspective, which accentuates the importance of leveraging the world’s structure as a continual learning strategy.